Is AI a Goldmine or a Landmine for Athlete Brands?

You’re a sports fan in 2028.

You log into your fitness app and a chat pops up — it’s an AI workout assistant that looks and talks like your favorite NBA star. You input your training goals for this week and get back a personalized diet and training plan based on your needs.

You pop on your VR headset and fire up Madden 2028 — you scroll through a roster of thousands of hyper-realistic AI-generated players from throughout time and create your team. You head onto the field.

Later, you turn on SportsCenter and watch as Stephen A. Smith debates an AI-generated young Charles Barkley. They’re arguing over who’s wearing the sharper suit, which you can buy using your Apple Cash directly from your headset.

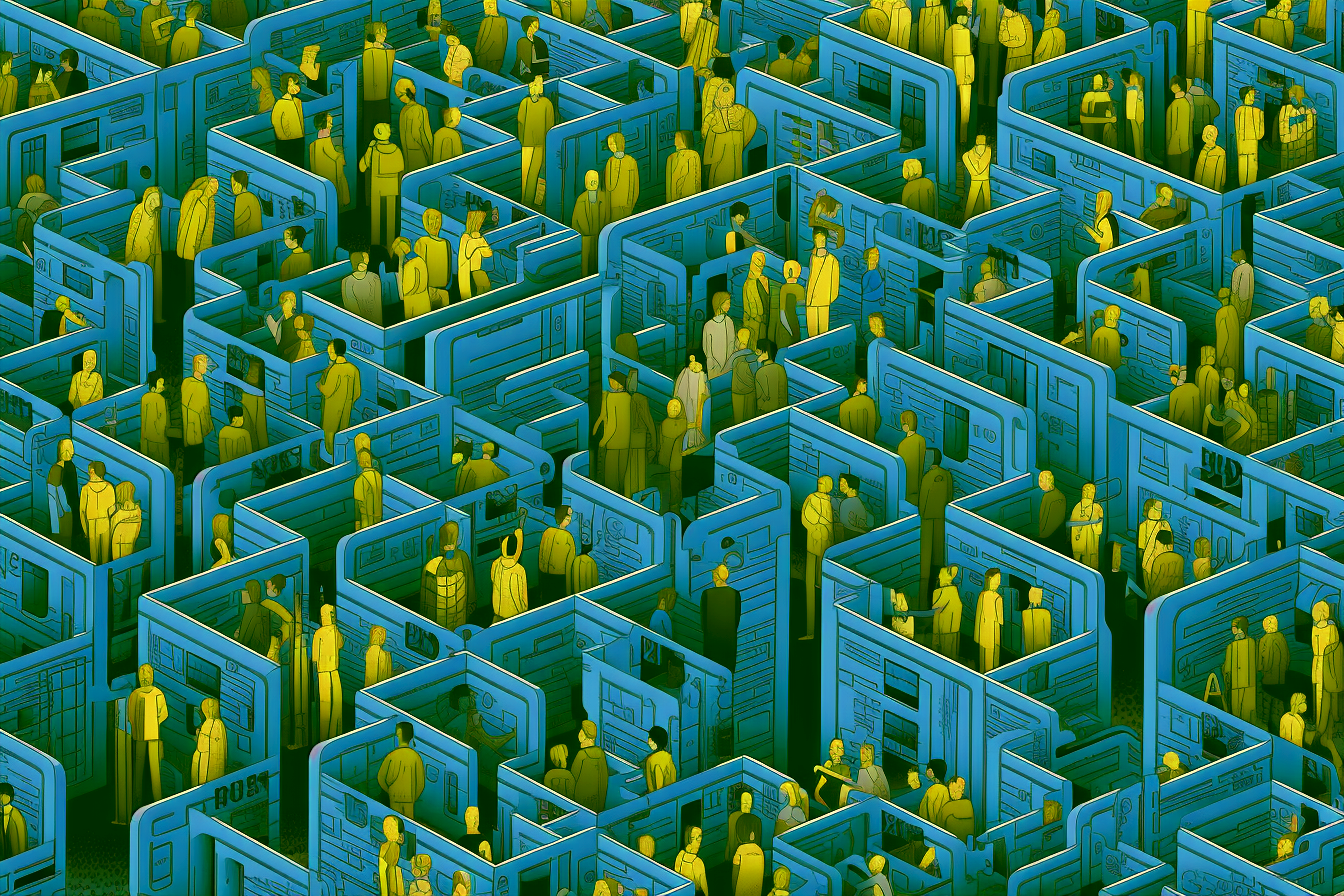

AI – with its seemingly endless ability to create, analyze, and mimic – is transforming industries at a breakneck pace. Athletes are uniquely positioned to capitalize on this tech to reimagine sports branding.

Athletes, bound by the constraints of time and resources, now have the potential to leverage their likeness and scale their brands in innovative ways to engage fans.

AI also poses considerable risk. It’s uncharted territory with pitfalls that range from unauthorized deepfakes to AI-generated communication that fans find inauthentic.

This new age might be a goldmine for athletes, but they’ll have to avoid the landmines first.

AI’s potential role in enhancing personal branding in sports cannot be overstated.

Already, AI-driven analytics can synthesize vast amounts of data about fans’ behaviors and preferences, allowing athletes to tailor their brands to better target individuals.

Personalized fan experiences, from AI-curated content to virtual meet-and-greets, are poised to redefine the fan-athlete dynamic, creating a stronger and more direct connection. Generative AI models could be trained to replicate an athlete’s voice and tone. These models could then create unique content in the athlete’s style, targeted at the fans most interested in the topic.

The economies of scale that automation brings, including to aspects of content creation, also let athletes with smaller followings boost their brands and reach more fans. This shifts the power dynamics within the sports industry, placing control back into the hands of the athletes.

But these tools are not without risk; there is also the potential for AI to erode the value of an athlete’s brand. Automated content, while efficient, lacks the genuine human touch and authenticity that fans often seek. The uniformity resulting from AI processes might also lead to diluted brand identity, reducing differentiation and competitive edge.

Equally, the potential for misuse is significant.

Deepfakes, AI-manipulated images or videos that often appear authentic, present a risk even today. As the technology improves – and the ease of creating convincing synthetic media rapidly increases – public figures will have to reckon with the potential consequences of false narratives being planted by fake versions of themselves. The current legal framework, predominantly designed for a pre-AI era, struggles to tackle these novel challenges.

Image rights, the linchpin of an athlete’s brand, face an unprecedented threat with the advent of AI. The ability of AI to create lifelike digital personas of athletes, and use them in a myriad of contexts, raises complex issues surrounding consent and ownership. An entirely new framework for licensing athlete likenesses – and for objecting to the use of unlicensed, AI-generated likenesses – is needed.

The Threat to Brand Ownership and Authenticity

Need for Regulation

Contracts and the legal framework need to evolve to address the challenges posed by AI, protecting athletes’ image rights and preventing misuse. Transparency and ethical considerations must guide the deployment of AI in sports branding, ensuring it enhances rather than detracts from the athlete’s brand value.

The emerging age of AI offers a wealth of opportunity and a chance to redefine the athlete-fan relationship. In the delicate balance between scaling and protecting an athlete’s brand, AI represents both a goldmine and a landmine. As we chart this new terrain, the challenge lies in unlocking the promise of AI while safeguarding against its perils.

Are these fears going to stifle creativity, innovation, and commercial growth? Humans have proven themselves eager to jump at hyped, if unproven, tech that promises financial gain. Just look at crypto! Will athletes fall into the same trap and pivot too quickly to using AI to redefine their personal brands? Maybe? Probably.

Do you agree with this?

Do you disagree or have a completely different perspective?

We’d love to know.

AI Goldmines and Landmines

An NBA Player Surveys the AI Opportunity

Recently I fell down a rabbit hole thinking about the potential of AI-generated avatars and conversational bots and how they might help revolutionize fan interaction.

Before this technological era, a person couldn’t be in two places at once or appear to come back from the dead. But this technology — which seemed so novel and impossible to fathom — is now right at our fingertips.

Did Tom Brady Really Say That?

Athletes, bound by the constraints of time and resources, now have the potential to leverage their likeness and scale their brands in innovative ways to engage fans.

But AI also poses considerable risk. It’s uncharted territory with pitfalls that range from unauthorized deepfakes to AI-generated communication that fans find inauthentic.